Documentation Index

Fetch the complete documentation index at: https://docs.hydra.so/llms.txt

Use this file to discover all available pages before exploring further.

- Unlock realtime analytics on existing Postgres deployments

- Unparalleled infrastructure control

- Best price:performance of any analytics database on AWS, GCP, and Azure.

- Automatically managed Hydra versions, build & run dependencies, operating system, and platform architecture using our postgres extension manager, pgxman.

- 1 minute to setup!

Curl:

curl -sfL https://install.pgx.sh | sh -Homebrew:

brew install pgxman/tap/pgxmanStep 2: Install the Hydra Packagepgxman install hydra_pg_duckdbStep 3: Add configuration for Hydra to your Postgres config file at: /etc/postgresql/{version}/main/postgresql.confAdd Setting:shared_preload_libraries = 'pg_duckdb'duckdb.hydra_token = 'fetch token at http://start.hydra.so/get-started' Fetch an access token for free from the URL above and paste it in.Step 4: Restart Postgres and Create extensionsudo service postgresql restartcreate extension pg_duckdb;🎉 Installation Complete! Next, check out the Quick Start guide to start using Serverless Analytics on Postgres.

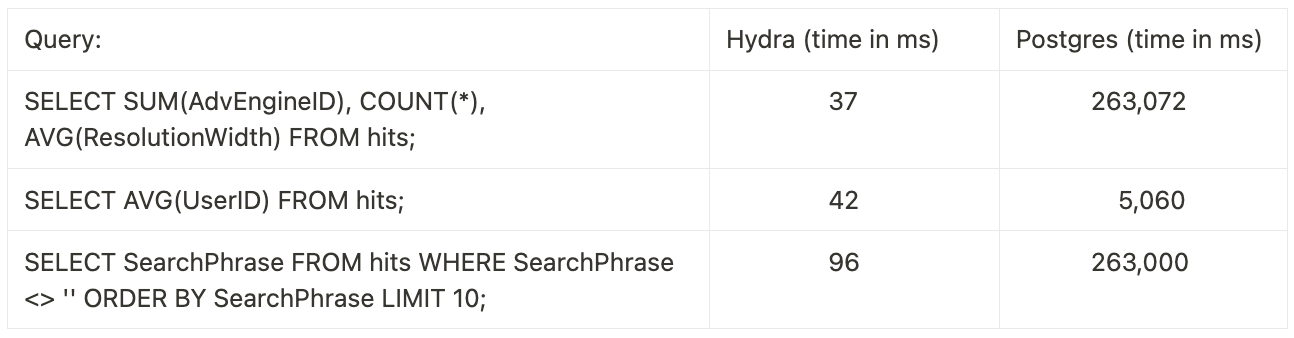

| Database Name | Relative time (lower is better) |

|---|---|

| Hydra | 1.51 |

| ClickHouse (c6a.4XL) | 1.93 |

| Snowflake (128x4XL) | 2.71 |

| Redshift (serverless) | 2.80 |

| TimescaleDB | 16.76 |

| AlloyDB | 34.52 |

| AWS Aurora | 277.78 |

| PostgreSQL (indexes) | 35.20 |

| Postgres (no indexes) | 2283 |

- Storage Auto Grow - Never worry about running out of bottomless analytics storage.

- 10X Data Compression - Data stored in analytics tables benefit from efficient data compression of 5-15X and is ideal for large data volumes. For example: 150GB becomes 15GB with a 10X compression.

- Automatic Caching - Managed within Hydra to enable sub-second analytics, predictably.

- Multi-node Reads - Connect n# of Postgres databases with Hydra to Deep Storage. Ideal for organizations that read events, clicks, traces, time series data from a global source of truth.

-

Parallel, vectorized execution (benchmark)

.png?fit=max&auto=format&n=qsYyo-rksckbkjwh&q=85&s=766329ffb359ba92db9b1916c124f600)

- Compute Autoscale - Hydra scales compute automatically per query to ensure preditably quick efficient processing of analytical queries.

- Isolated Compute Tenancy - Processes run on dedicated compute resources, improving performance by preventing resource contention. This update enhances performance reliability and preditability through scale.

- Support using Postgres indexes and reading from partitioned tables.

- The

AS (id bigint, name text)syntax is no longer supported when usingread_parquet,iceberg_scan, etc. The new syntax is as follows:

- Add a

duckdb.queryfunction which allows using DuckDB query syntax in Postgres. - Support the

approx_count_distinctDuckDB aggregate. - Support the

bytea(aka blob),uhugeint,jsonb,timestamp_ns,timestamp_ms,timestamp_s&intervaltypes. - Support DuckDB json functions and aggregates.

- Add support for the

duckdb.allow_community_extensionssetting. - We have an official logo\\\! 🎉

- Allow executing

duckdb.raw_query,duckdb.cache_info,duckdb.cache_deleteandduckdb.recycle_dbas non-superusers.

- Correctly parse parameter lists in

COPYcommands. This allows usingPARTITION_BYas one of theCOPYoptions. - Correctly read cache metadata for files larger than 4GB.

- Fix bug in parameter handling for prepared statements and PL/pgSQL functions.

- Fix comparisons and operators on the

timestamp with timezonefield by enabling DuckDB itsicuextension by default. - Allow using

read_parquetfunctions when not using superuser privileges. - Fix some case insensitivity issues when reading from Postgres tables.

- Support for reading Delta Lake storage using the

duckdb.delta_scan(...)function. - Support for reading JSON using the

duckdb.read_json(...)function. - Support for multi-statement transactions.

- Support reading from Azure Blob storage.

- Support many more array types, such as

float,numericanduuidarrays. - Support for PostgreSQL 14.

- Manage cached files using the

duckdb.cache_info()andduckdb.cache_delete()functions. - Add

scopecolumn toduckdb.secretstable. - Automatically install and load known DuckDB extensions when queries use them. So,

duckdb.install_extension()is usually not necessary anymore.

- Improve performance of heap reading.

- Bump DuckDB version to 1.1.3.

- Throw a clear error when reading partitioned tables (reading from partitioned tables is not supported yet).

- Fixed crash when using

CREATE SCHEMA AUTHORIZATION. - Fix queries inserting into DuckDB tables with

DEFAULTvalues. - Fixed assertion failure involving recursive CTEs.

- Much better separation between C and C++ code, to avoid memory leaks and crashes (many PRs).

SELECTqueries executed by the DuckDB engine can directly read Postgres tables. (If you only query Postgres tables you need to runSET duckdb.force_execution TO true, see the IMPORTANT section above for details)- Able to read data types that exist in both Postgres and DuckDB.

- If DuckDB cannot support the query for any reason, execution falls back to Postgres.

- Read and Write support for object storage (AWS S3, Azure, Cloudflare R2, or Google GCS):

- Read parquet, CSV and JSON files:

SELECT n FROM read_parquet('s3://bucket/file.parquet') AS (n int)SELECT n FROM read_csv('s3://bucket/file.csv') AS (n int)- You can pass globs and arrays to these functions, just like in DuckDB

- Enable the DuckDB Iceberg extension using

SELECT duckdb.install_extension('iceberg')and read Iceberg files withiceberg_scan. - Write a query — or an entire table — to parquet in object storage.

COPY (SELECT foo, bar FROM baz) TO 's3://...'COPY table TO 's3://...'- Read and write to Parquet format in a single query COPY ( SELECT count(*), name FROM read_parquet(‘s3://bucket/file.parquet’) AS (name text) GROUP BY name ORDER BY count DESC ) TO ‘s3://bucket/results.parquet’;

- Read parquet, CSV and JSON files:

- Query and

JOINdata in object storage/MotherDuck with Postgres tables, views, and materialized views. - Create temporary tables in DuckDB its columnar storage format using

CREATE TEMP TABLE ... USING duckdb. - Install DuckDB extensions using

SELECT duckdb.install_extension('extension_name'); - Toggle DuckDB execution on/off with a setting:

SET duckdb.force_execution = true|false

- Cache remote object locally for faster execution using

SELECT duckdb.cache('path', 'type');where- ‘path’ is HTTPFS/S3/GCS/R2 remote object

- ‘type’ specify remote object type: ‘parquet’ or ‘csv’